AI Will Never Replace Engineers

It is 3 AM. A senior engineer at a Series B startup is staring at a codebase that does not work anymore. Three months ago this system shipped features twice as fast as competitors. The team used Claude Code aggressively. Every ticket became a prompt. Every feature shipped within hours of being scoped. Investors loved it. The CEO posted on LinkedIn about how AI tripled their velocity.

Tonight the engineer is reading a function that calls a function that mutates state in a service that nobody on the current team wrote. The function works in isolation. Its tests pass. But when it runs in production with real traffic, the system corrupts data in ways that take days to debug. The engineer is not building anything new tonight. He is trying to understand what was built without him, by something that does not understand systems the way humans do.

This scene is happening in thousands of companies right now. Most of them have not noticed yet. The ones that have noticed are quietly hiring engineers at salaries 30% higher than they were paying a year ago. I see this on my job board every week. The bill for "AI tripled our velocity" is coming due, and it is being paid by humans who can do something AI fundamentally cannot do.

This article is about why the headlines saying AI will replace engineers are wrong, why they will keep being wrong even as AI gets better, and why the engineers who understand this are about to become more valuable than at any point in the history of the profession.

What Software Actually Is and Why Most CEOs Get This Wrong

Software is not text on a screen. Software is not a list of features. Software is not the output of typing keys in an IDE. Software is a system that exists in time, with a memory of why every decision was made, what it depends on, what it was meant to prevent, and what it would break if you changed it.

The text you write is the smallest part. The actual software is the architecture, the invariants, the assumptions, the historical decisions, and the dependencies between components that nobody documented because they seemed obvious in February but are not obvious in October. The code is just a fingerprint of the system. The system itself lives in the heads of the engineers who built it and the engineers who maintain it.

When CEOs say "AI will write all the code," they are revealing that they think software is the typing part. They are wrong in a way that costs companies tens of millions of dollars when the bills come due. The typing part is the easiest part. The hard part is everything around it. The hard part is what humans do without realizing they are doing it.

Every senior engineer reading this knows what I mean. You have a feeling when you look at a piece of code that something is wrong even though it compiles and the tests pass. You know that adding this feature here will make the next three features harder to build. You know that this abstraction is being used in two ways and the second way will become a bug in six months. You cannot fully articulate why you know these things. You just do. This intuition is the actual job, and it overlaps directly with system design thinking, which I have argued is the one skill AI cannot automate.

Software Entropy and Why It Cannot Be Beaten By Generation Speed

There is a long-observed tendency in software systems called entropy. Every change to a system, no matter how small, increases its disorder unless conscious design pushes back. This is not a coding style preference. This is a structural property of complex systems. The name is borrowed, by analogy, from the thermodynamic entropy of physical systems.

The only thing that prevents software entropy is engineering judgment that constantly resists it. An engineer who notices that this new feature is being added in a way that will create three future bugs and refuses to add it that way. An engineer who sees that the function being requested is similar to one that already exists and refactors both into a single cleaner version. An engineer who deletes 200 lines of code because they realize the whole approach is wrong before adding more on top.
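To make the second case concrete, here is a minimal sketch of folding a requested near-duplicate into an existing helper. The `Item` type and the names `totalPrice`, `totalWeight`, and `sumBy` are all hypothetical, invented for illustration; the point is only that the request for "a function like that one, but for weight" ends with less duplicated logic, not more.

```typescript
// Hypothetical domain type, used only for this illustration.
interface Item {
  price: number;
  weight: number;
}

// The helper that already exists in the codebase.
function totalPrice(items: Item[]): number {
  let sum = 0;
  for (const item of items) sum += item.price;
  return sum;
}

// The request was for a totalWeight function that copies the loop above.
// Instead of adding a second loop, both cases share one generic helper.
function sumBy<T>(items: T[], pick: (item: T) => number): number {
  return items.reduce((sum, item) => sum + pick(item), 0);
}

const cart: Item[] = [
  { price: 10, weight: 2 },
  { price: 20, weight: 3 },
];

const priceTotal = sumBy(cart, (item) => item.price);
const weightTotal = sumBy(cart, (item) => item.weight);
console.log(priceTotal, weightTotal);
```

Once callers have moved to `sumBy`, the original `totalPrice` can be deleted, so the change ends with one loop instead of two.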

This work is anti-production. It is the engineer choosing not to write code. Choosing to delete. Choosing to say no. Choosing to think for two hours before typing for thirty minutes. Every time this happens, entropy decreases and the system stays maintainable. Every time this is skipped, entropy increases and the system gets harder to change next time.

Now think about what AI does. AI is trained to generate. Every prompt produces output. Every request produces code. The economic model of these tools is built on generation volume. Claude Code does not have a goal called "delete more than you add this week." Cursor does not whisper "this feature should not be built at all." GitHub Copilot does not refuse to autocomplete because the function being written would compromise the architecture.

AI produces. Engineers prevent. These are opposite forces in the same system. And the second force is the rare one. The first one was already abundant before AI. Junior engineers, eager teams, ambitious product managers, panicked CEOs. There has never been a shortage of people generating code. There has always been a shortage of people willing to stop and think and say no.

AI did not solve that shortage. AI made it worse.

Why This Is Not A Problem AI Will Solve By Getting Better

I want to address the obvious objection. Right now AI generates messy code, but in two years it will generate cleaner code, and in five years it will be better than humans at this too. This is the assumption underneath every "AI will replace engineers" prediction. It is wrong, and the reason it is wrong is more fundamental than most people realize.

The problem is not that AI generates bad code. The problem is that AI generates code at all in response to prompts. The act of generation, regardless of quality, is the wrong action when entropy management requires deletion, refactoring, or refusal.

A perfectly capable AI that produces flawless code in response to every prompt is still optimizing for the wrong objective. The engineer's job is to know when to write zero lines, not to write better lines. The engineer's job is to push back on the product manager and say this feature should not exist. The engineer's job is to throw away last month's work because it was based on assumptions that turned out to be wrong.

AI cannot do this because AI does not have the context to know that the request itself is the problem. AI cannot say no to its own user. The economic model would collapse if it did. Imagine paying $20 a month for a tool that responded to your prompts with "this is the wrong question, please reconsider." Nobody would pay for that. So nobody builds it. So AI tools are structurally trained to produce regardless of whether production is the right action.

This is not a limitation that better models fix. This is a structural feature of the tool itself. Generation is the product. Refusal would kill the business. We are paying AI companies billions of dollars to be relentlessly compliant, and compliance is the opposite of what entropy management requires.

The Asymmetry That Most Engineers Have Not Noticed Yet

Here is what is happening in real time, and most engineers have not connected the dots yet. AI is good at the parts of engineering that look like production. AI is terrible at the parts that look like restraint, judgment, and refusal. The first kind of work was historically done by junior engineers and the easier half of senior engineers. The second kind was always rare and always undervalued.

What AI is doing is not replacing engineers. AI is revealing which engineers were doing which kind of work. The ones who were essentially generating code with their hands at human speed are being matched by AI tools that generate at machine speed. The ones who were preventing entropy through judgment are not being replaced because there is nothing to replace them with.

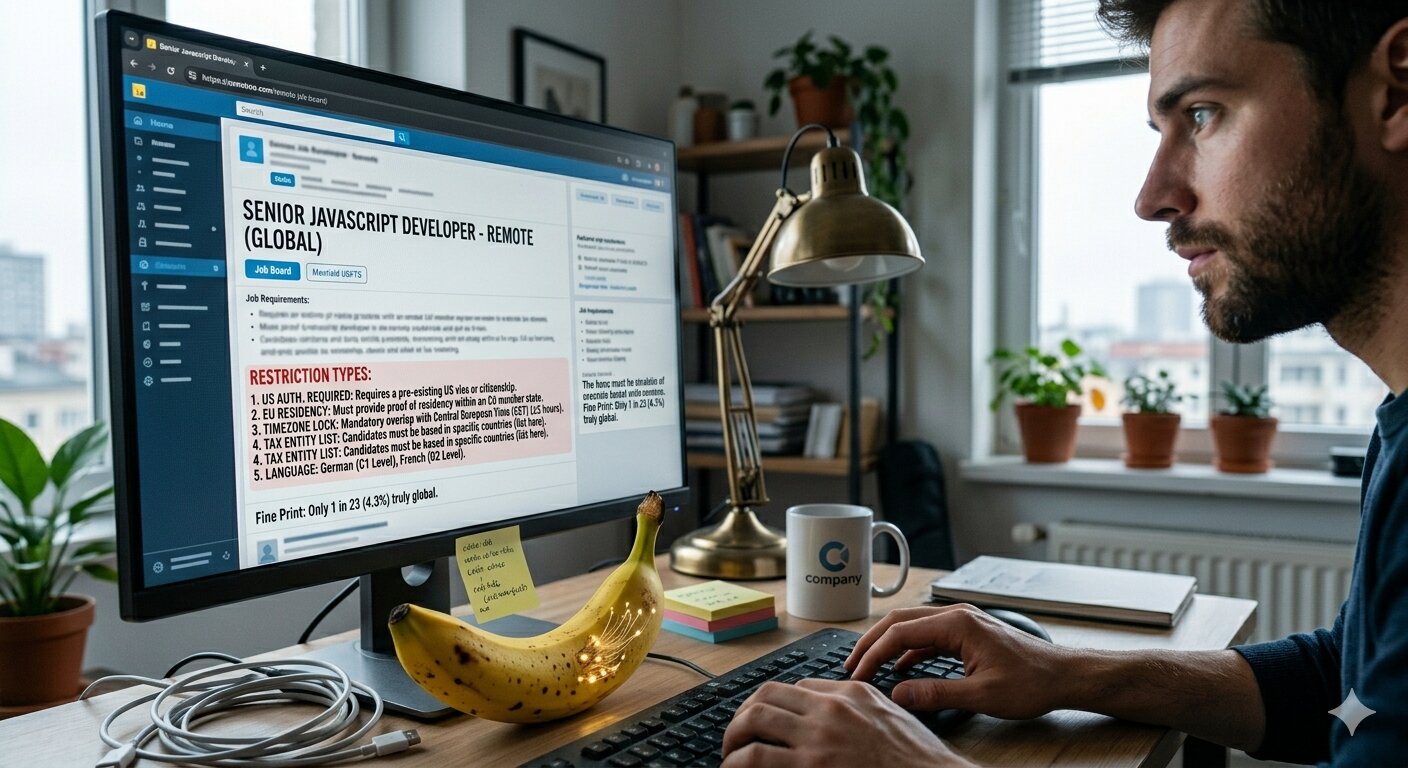

I see this play out in real numbers on my job board. Out of 468 active JavaScript listings right now, 35 explicitly mention refactoring, technical debt, or legacy code work. That is 7.5% of the market. A year ago this percentage was lower. These are not roles created by AI failure. These are roles that always existed but are growing because companies that built fast with AI are now hitting the wall that comes after fast. I wrote about similar patterns in my analysis of 415 JavaScript job postings, and the trend has only accelerated since.
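The 7.5% figure follows directly from the two counts quoted above. A small sketch of the arithmetic, where `sharePercent` is a hypothetical helper name and the rounding to one decimal place is my assumption about how the figure was reported:

```typescript
// Counts quoted in the article text.
const totalListings = 468;
const cleanupListings = 35; // listings mentioning refactoring, technical debt, or legacy code

// Share of listings as a percentage, rounded to one decimal place.
function sharePercent(part: number, total: number): number {
  if (total <= 0) throw new Error("total must be positive");
  return Math.round((part / total) * 1000) / 10;
}

const cleanupShare = sharePercent(cleanupListings, totalListings);
console.log(`${cleanupShare}%`); // prints "7.5%"
```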

The engineers being hired into these roles are not generalist coders. They are people who can read existing code at speed, understand systems they did not build, decide what to keep and what to delete, and refuse to add things that should not be added. They are paid more than the average senior because there are fewer of them and their skills are harder to find. Many of them came up through normal development, then specialized in cleanup work because they discovered they were good at it.

I think this is the most important career signal of the next three years and almost nobody is reading it correctly. The path to being indispensable in a world full of AI-generated code is not learning to use AI better. It is learning to do what AI cannot do at all.

What Engineers Should Actually Build As Their Career Skill Now

If I had to advise a developer in 2026 on what to build into their daily practice, knowing what I see in the market, I would say this.

Stop measuring your day by code shipped. Start measuring your day by problems solved without code. The senior engineer of 2027 is going to be the one who can sit in a meeting where everyone is excited about a new feature and say "we should not build this and here is why." Companies will pay a premium for that voice because they will have learned, expensively, that they have plenty of generation capacity and a critical shortage of restraint capacity.

Practice deleting code. When you join a project, the first thing you should do is identify code that does not need to exist. The functions called by nothing, the abstractions used in only one place, the configuration options nobody changes. Most teams have 20 to 40 percent dead code that adds maintenance burden and entropy without adding value. Engineers who can find and remove this code make systems more valuable through subtraction. AI cannot do this because AI does not know what is actually being used.
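As a rough illustration of hunting for "functions called by nothing," the sketch below walks a hand-written call graph from known entry points and reports whatever is never reached. The `CallGraph` shape, `findUnreachable`, and every function name in the example data are hypothetical. In a real codebase the graph would have to come from a static analysis tool, and dynamic calls (string-based dispatch, reflection) would not appear in it.

```typescript
// Hypothetical call graph: each function name maps to the functions it calls.
type CallGraph = Record<string, string[]>;

// Return every function in the graph that cannot be reached from the entry points.
function findUnreachable(graph: CallGraph, entryPoints: string[]): string[] {
  const reached = new Set<string>();
  const stack = [...entryPoints];

  while (stack.length > 0) {
    const current = stack.pop()!;
    if (reached.has(current)) continue;
    reached.add(current);
    // Callees missing from the graph are treated as external and have no edges.
    for (const callee of graph[current] ?? []) {
      stack.push(callee);
    }
  }

  return Object.keys(graph)
    .filter((name) => !reached.has(name))
    .sort();
}

// Example data, invented for illustration only.
const exampleGraph: CallGraph = {
  handleRequest: ["parseBody", "saveOrder"],
  parseBody: [],
  saveOrder: ["validateOrder"],
  validateOrder: [],
  legacyExport: ["formatCsv"], // nothing calls legacyExport
  formatCsv: [],
};

const deadCandidates = findUnreachable(exampleGraph, ["handleRequest"]);
console.log(deadCandidates); // [ 'formatCsv', 'legacyExport' ]
```

The output is a list of candidates, not a verdict. Deciding whether `legacyExport` is truly unused, or is invoked by a cron job nobody documented, is the judgment call this section is about.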

Build deep mental models of systems before you change them. The thing AI is worst at is holding the entire system in its head. Even when you give AI a large context window, it does not understand the system the way a human who has worked on it for six months does. This understanding is your moat. It cannot be transferred. It cannot be prompted. It can only be built, slowly, by working with code over time and remembering why decisions were made. This is exactly the kind of skill that I argue makes you layoff-proof in 2026, regardless of what AI tools your company adopts.

Learn to say no diplomatically. The biggest meta-skill of the next five years is being the engineer who can tell a product manager their feature request is wrong without making them feel attacked. Every team has someone who is technically excellent but cannot do this and so gets ignored. Every team needs someone who can do it and gets listened to. Be the second person.

What This Means For The Engineers Who Get It

The headlines will keep saying AI is going to replace engineers. CEOs will keep saying it because their economic interest requires them to say it. The press will keep covering it because fear sells better than nuance. Most engineers will keep panicking about it and either checking out of the profession or trying to compete with AI on production speed. I gave a more detailed answer to this question for junior developers specifically in my honest take on whether AI will replace juniors, and the conclusion there applies to seniors too with one important difference.

A small number of engineers will read what is actually happening, recognize that AI is revealing rather than replacing, and position themselves on the side of the work that AI cannot do. These engineers will become more valuable every year. Their salaries will rise faster than the market average. They will have leverage in interviews because they understand what they offer that cannot be replaced. Companies that built unsustainable AI-generated codebases will compete to hire them at premium rates.

I am not predicting the next two years. I am describing what I see right now in 468 active job listings, in messages I get from developers, and in patterns that anyone running a job board would notice if they were paying attention. The engineers who get this are not waiting to react. They are positioning themselves now.

The CEOs of AI companies have a financial interest in saying "coding is going away." Of course they say that. It sells subscriptions. It justifies their valuations. It moves stock prices. But notice that none of them are actually firing their senior engineers. They are hiring more of them. They know the difference between what AI can do and what engineering actually is. They just cannot say it publicly because their business model depends on you believing the opposite.

Coding is not going away. It is bifurcating. One side is becoming a commodity that AI handles. The other side is becoming a premium service that humans deliver and that companies pay more for every quarter. Choose the right side and your career compounds. Choose the wrong side and you are competing with a machine on its home court.

If you want more observations from inside the JavaScript market in 2026, I publish weekly at jsgurujobs.com.

FAQ

Will AI eventually replace senior engineers as it gets better?

No, and the reason is structural rather than capability-based. Senior engineering work is largely about restraint, judgment, and refusal. Knowing when not to write code is the core skill. AI tools are economically built to generate output in response to prompts. They cannot say no to their own users without breaking their business model. Better AI does not solve this. When the right move is to write nothing, generation is the wrong action regardless of execution quality.

What kinds of engineering jobs are growing most in 2026?

Refactoring, technical debt cleanup, system migration, and legacy code maintenance roles are growing visibly. On my job board these account for 7.5% of active JavaScript listings, up from a lower percentage a year ago. Companies that built fast with AI are now hiring engineers to clean up the resulting mess. These roles often pay 20-30% above average senior salaries because the supply of qualified candidates is limited.

How should developers position themselves to be irreplaceable in an AI-heavy market?

Build skills AI structurally cannot replicate. Learn to read existing code quickly and understand systems you did not build. Practice deleting code rather than adding it. Develop the diplomatic ability to refuse feature requests that would damage the architecture. Hold deep mental models of systems in your head over time. None of this can be prompted, automated, or replaced by faster generation.